The Laplace Transform Turns Memory Into Algebra

The Fourier transform asks what frequencies live inside a signal. The Laplace transform asks a related but broader question: how much of the signal remains after you discount the future exponentially?

For a function $f(t)$ defined for $t\ge 0$, the Laplace transform is

$$F(s) = \int_0^\infty f(t)\,e^{-st}\,dt.$$

For real $s > 0$, the factor $e^{-st}$ is a fading memory. Large $s$ weights mostly the near future. Small $s$ lets distant times matter. If $s$ is complex, the weight also oscillates, which connects Laplace back to Fourier.

live slideshow: essay-2026-laplace-transform-turns-memory-into-algebra-1.mcs

param decay = 0.85

background = BLACK

camera = Camera(4b)

let signal = |t| 0.75 * sin(3 * t) * exp(-0.22 * t)

let weight = |t, decay| exp(-decay * t)

let WeightCurve = |decay| block {

. stroke{ORANGE, 2} ExplicitFunc(|t| weight(t, decay), [0, 3.3, 220])

}

let Diagram = |decay| block {

. stroke{GRAY, 1} Line(start: [-0.2, 0, 0], end: [3.4, 0, 0])

. stroke{BLUE, 3} ExplicitFunc(|t| signal(t), [0, 3.3, 220])

. WeightCurve(decay)

. color{WHITE} center{[1.6, 1.35, 0]} Text("exponential memory", 0.88)

}

mesh diagram = Diagram($decay)

"forget faster"

decay = 1.8

play Lerp(1.4)

The orange curve is the memory weight. As it decays faster, the transform pays less attention to later parts of the blue signal. The number $F(s)$ is the area under the blue signal after it has been multiplied by that weight.
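To make the weighted area concrete, here is a minimal Python sketch that integrates the blue signal from the diagram against $e^{-st}$ and compares the result with the standard table entry for a damped sine. The helper names (`laplace_numeric`, `laplace_exact`), the truncation time, and the step count are illustrative choices, not part of the diagram code, and the check assumes real $s > 0$.

```python
import math

def signal(t):
    # The blue curve from the diagram: a decaying sinusoid.
    return 0.75 * math.sin(3.0 * t) * math.exp(-0.22 * t)

def laplace_numeric(f, s, t_max=60.0, n=60000):
    """Approximate F(s) = integral_0^inf f(t) e^{-st} dt for real s > 0.

    Uses composite Simpson's rule on [0, t_max]; the tail beyond t_max
    is assumed negligible because of the exponential weight.
    """
    if n % 2:
        n += 1  # Simpson's rule needs an even number of subintervals
    h = t_max / n
    total = f(0.0) + f(t_max) * math.exp(-s * t_max)
    for k in range(1, n):
        t = k * h
        coeff = 4 if k % 2 else 2
        total += coeff * f(t) * math.exp(-s * t)
    return total * h / 3.0

def laplace_exact(s):
    # Table entry: L{A e^{-bt} sin(wt)} = A w / ((s + b)^2 + w^2)
    return 0.75 * 3.0 / ((s + 0.22) ** 2 + 9.0)

# The two decay rates used in the slideshow.
for s in (0.85, 1.8):
    print(s, laplace_numeric(signal, s), laplace_exact(s))
```

The two columns should agree closely, and the value at the faster decay rate comes out smaller: faster forgetting leaves less of the signal to count.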

Differentiation becomes multiplication

The Laplace transform is useful because it handles derivatives beautifully. If $F(s)$ is the transform of $f(t)$, then

$$\mathcal{L}\{f'(t)\} = sF(s) - f(0).$$

The derivative in time becomes multiplication by $s$, with a correction for the initial value.

That correction is not a nuisance. It is exactly what you want in differential equations, because initial conditions are part of the problem.
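The rule that the transform of $f'$ equals $sF(s) - f(0)$ can be checked numerically. The sketch below assumes the test function $f(t) = \cos 2t$ and the value $s = 1.5$, both chosen only for illustration, and uses a hypothetical helper `laplace_numeric` based on Simpson's rule.

```python
import math

def laplace_numeric(f, s, t_max=40.0, n=40000):
    """Truncated Laplace transform by Simpson's rule, for real s > 0."""
    h = t_max / n  # n is even, as Simpson's rule requires
    total = f(0.0) + f(t_max) * math.exp(-s * t_max)
    for k in range(1, n):
        t = k * h
        total += (4 if k % 2 else 2) * f(t) * math.exp(-s * t)
    return total * h / 3.0

def f(t):
    return math.cos(2.0 * t)

def f_prime(t):
    return -2.0 * math.sin(2.0 * t)

s = 1.5
lhs = laplace_numeric(f_prime, s)            # transform of the derivative
rhs = s * laplace_numeric(f, s) - f(0.0)     # s F(s) minus the initial value
print(lhs, rhs)
```

Dropping the `- f(0.0)` term makes the two sides disagree by exactly the initial value, which is the correction the text describes.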

Consider the simple equation

$$x'(t) + a\,x(t) = u(t),$$

where $u(t)$ is an input and $x(t)$ is the state. Taking the Laplace transform gives

$$sX(s) - x(0) + aX(s) = U(s).$$

Solving for $X(s)$,

$$X(s) = \frac{U(s) + x(0)}{s + a}.$$

A differential equation has become algebra.
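As a worked instance, assume the equation is $x' + a x = u$ with a unit step input, so $U(s) = 1/s$. Then $X(s) = \frac{1}{s(s+a)} + \frac{x(0)}{s+a}$, and partial fractions give $x(t) = \frac{1}{a} + \left(x(0) - \frac{1}{a}\right)e^{-at}$. The sketch below compares that algebraic answer with a direct Runge-Kutta integration of the same equation; the values of `a` and `x0` and the function names are illustrative assumptions.

```python
import math

def x_from_laplace(t, a, x0):
    # Inverse transform of X(s) = (1/s + x0) / (s + a) for a unit step input.
    return 1.0 / a + (x0 - 1.0 / a) * math.exp(-a * t)

def x_from_rk4(t_end, a, x0, steps=2000):
    # Classical fourth-order Runge-Kutta on x' = -a x + u with u = 1.
    def rhs(x):
        return -a * x + 1.0
    h = t_end / steps
    x = x0
    for _ in range(steps):
        k1 = rhs(x)
        k2 = rhs(x + 0.5 * h * k1)
        k3 = rhs(x + 0.5 * h * k2)
        k4 = rhs(x + h * k3)
        x += h * (k1 + 2.0 * k2 + 2.0 * k3 + k4) / 6.0
    return x

a, x0 = 2.0, 3.0
for t in (0.5, 1.0, 2.0):
    print(t, x_from_rk4(t, a, x0), x_from_laplace(t, a, x0))
```

The time-stepping route has to march through every instant. The Laplace route gets the same numbers from one division and one table lookup.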

The denominator is the system

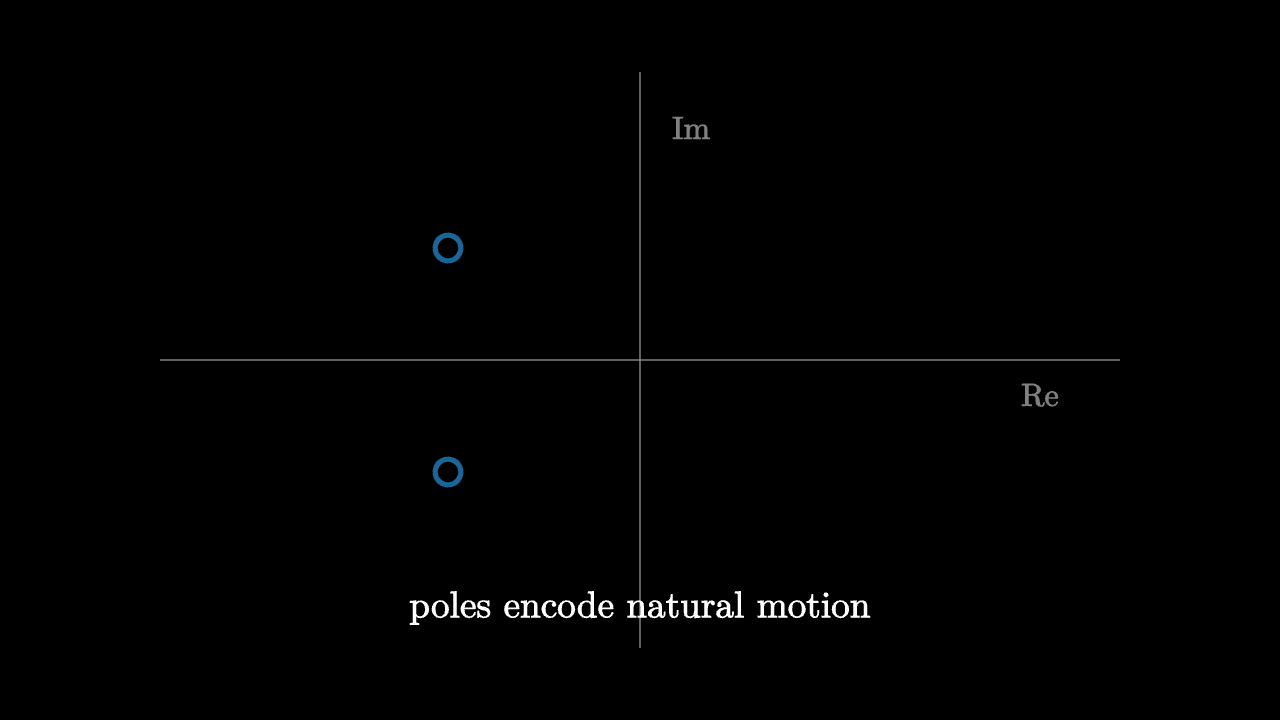

The factor $s+a$ is not just a symbol. It describes the natural decay of the system. If the input is zero, the solution is proportional to $e^{-at}$. The pole at $s=-a$ remembers that decay rate.

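For more complicated linear systems, the denominator of the Laplace-domain expression encodes the modes of the system. Poles tell you whether responses decay, oscillate, or grow: a negative real part means decay, a positive real part means growth, and a nonzero imaginary part means oscillation.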

This is why engineers draw pole-zero diagrams. They are not decorative. They are maps of the system's possible behaviors.
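Reading a pole is mechanical enough to sketch in code. The example below assumes a second-order denominator $s^2 + bs + c$ whose coefficients are chosen so that its roots land on the pair $-1.2 \pm 0.7i$ used in the pole diagram; the function names and the tolerance are hypothetical.

```python
import cmath

def quadratic_poles(b, c):
    """Roots of s^2 + b s + c = 0, returned as complex numbers."""
    disc = cmath.sqrt(b * b - 4.0 * c)
    return ((-b + disc) / 2.0, (-b - disc) / 2.0)

def describe_pole(p, tol=1e-12):
    # The real part sets decay or growth; the imaginary part sets oscillation.
    if p.real < -tol:
        growth = "decays"
    elif p.real > tol:
        growth = "grows"
    else:
        growth = "neither decays nor grows"
    shape = "oscillates" if abs(p.imag) > tol else "does not oscillate"
    return growth + ", " + shape

# (s + 1.2)^2 + 0.7^2 = s^2 + 2.4 s + 1.93
for pole in quadratic_poles(2.4, 1.93):
    print(pole, "->", describe_pole(pole))
```

Both poles sit in the left half-plane off the real axis, so the corresponding response is a decaying oscillation.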

image: essay-2026-laplace-transform-turns-memory-into-algebra-2.mcs

background = BLACK

camera = Camera(4b)

mesh diagram = block {

. stroke{GRAY, 1} Line(start: [-3, 0, 0], end: [3, 0, 0])

. stroke{GRAY, 1} Line(start: [0, -1.8, 0], end: [0, 1.8, 0])

. stroke{BLUE, 3} center{[-1.2, 0.7, 0]} Circle(0.08)

. stroke{BLUE, 3} center{[-1.2, -0.7, 0]} Circle(0.08)

. color{WHITE} center{[0, -1.55, 0]} Text("poles encode natural motion", 0.84)

. color{GRAY} center{[2.5, -0.22, 0]} Text("Re", 0.72)

. color{GRAY} center{[0.32, 1.45, 0]} Text("Im", 0.72)

}

"poles"

play Fade(0.6)

Convolution becomes multiplication

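Linear time-invariant systems respond to inputs by convolution:

$$y(t) = \int_0^t h(t-\tau)\,u(\tau)\,d\tau,$$

where $h$ is the impulse response, $u$ is the input, and $y$ is the output. In the Laplace domain, this becomes

$$Y(s) = H(s)\,U(s).$$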

This is the same miracle as Fourier analysis. Complicated accumulation in time becomes multiplication after a transform. The transform chooses coordinates in which the system acts independently on each exponential mode.
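The claim that convolution in time becomes a product of transforms can be tested directly. This sketch assumes an impulse response $h(t) = e^{-At}$ with $A = 2$ and a unit step input, both picked for illustration; it convolves them numerically, transforms the result, and compares it with the product of the two individual transforms. The helpers `simpson` and `laplace` are hypothetical names, and the grid sizes are kept small so the nested integration runs quickly.

```python
import math

A = 2.0  # hypothetical decay rate of the impulse response

def h(t):
    return math.exp(-A * t)

def u(t):
    return 1.0  # unit step input for t >= 0

def simpson(g, lo, hi, n):
    """Composite Simpson's rule with n (even) subintervals."""
    step = (hi - lo) / n
    total = g(lo) + g(hi)
    for k in range(1, n):
        total += (4 if k % 2 else 2) * g(lo + k * step)
    return total * step / 3.0

def y(t):
    # Time domain: y(t) = integral_0^t h(t - tau) u(tau) d tau
    return simpson(lambda tau: h(t - tau) * u(tau), 0.0, t, 200)

def laplace(f, s, t_max=20.0, n=2000):
    # Truncated transform; adequate here because s > 0 suppresses the tail.
    return simpson(lambda t: f(t) * math.exp(-s * t), 0.0, t_max, n)

s = 1.5
Y = laplace(y, s)   # transform of the convolution
H = laplace(h, s)   # transform of the impulse response
U = laplace(u, s)   # transform of the input
print(Y, H * U, 1.0 / (s * (s + A)))
```

All three printed numbers should match to several digits. The third is the closed form $\frac{1}{s(s+A)}$ that the algebra predicts for this particular pair.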

Why exponentials are the right probes

The function $e^{st}$ is an eigenfunction of differentiation:

$$\frac{d}{dt}e^{st} = s\,e^{st}.$$

That one equation explains the whole method. Linear differential equations are built from differentiation and addition. Exponentials turn differentiation into multiplication, so they diagonalize the behavior of the system.
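The eigenfunction property is easy to probe numerically, even for complex $s$. The sketch below uses a central finite difference as a stand-in for the derivative, then applies the operator $x \mapsto x' + a x$ to the same exponential; the values of `s`, `a`, and `t` are arbitrary illustrative choices.

```python
import cmath

def derivative(f, t, h=1e-5):
    # Central finite difference; an approximation, not an exact derivative.
    return (f(t + h) - f(t - h)) / (2.0 * h)

s = complex(-1.2, 0.7)  # an arbitrary complex frequency
a = 2.0                 # coefficient in the operator x -> x' + a x
t = 0.8                 # any sample time works

def probe(t):
    return cmath.exp(s * t)

# Differentiation only rescales the exponential by s.
eigenvalue = derivative(probe, t) / probe(t)

# The operator x' + a x therefore rescales it by s + a.
operator_gain = (derivative(probe, t) + a * probe(t)) / probe(t)

print(eigenvalue, s)
print(operator_gain, s + a)
```

The second ratio is the factor $s + a$ that appears in the denominator of a first-order system: the operator acting on $e^{st}$ is just multiplication by a polynomial in $s$.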

The Laplace transform measures a signal against decaying exponentials because those are the shapes linear systems understand.

Seen this way, the transform is not a bag of table entries. It is a change of viewpoint. Time-domain behavior can be tangled, with memory and accumulation. In the Laplace domain, the same behavior becomes algebraic structure: poles, zeros, multiplication, and division.

The cost is abstraction. The reward is that a moving system becomes a static expression.